Glenn Beamer, University of Virginia

ABSTRACT

Elite interviews have long been a staple of state politics research. Improved information technology facilitates the use of elite interviews, but also underscores the need for attending to their design, operationalization, and analysis. This essay provides a framework for developing, conducting, and interpreting elite interviews, and suggests means to enhance the validity and reliability of findings by considering instrumentation, sampling, data collection, and transcript analysis.

ELITE INTERVIEWS OFFER POLITICAL scientists a rich, cost-effective vehicle for generating unique data to investigate the complexities of policy and politics (Dexter 1970). These advantages are particularly appealing at the sub-national level due to the abundance of and variation among subjects and the ready access to these subjects in the states. Given elite interviews’ ability to generate highly reliable and valid data, they have long been a staple of state politics research (Reeher 1996; Beamer 1999; Jewell 1982; Morehouse 1998; Jewell and Whicker 1994).

The utility and validity of information produced by elite interviews depend on the analyst’s research design. Poorly prepared and unstructured interviews can yield poor information and funnel an inquiry away from the primary research focus to a respondent’s stream-of-consciousness thoughts and biased perceptions. To ensure reliability, researchers using elite interviews must pay careful attention to question formats and wording, sampling, and the process of data collection and analysis. This essay addresses each of these issues, identifying ways to enhance the validity of elite interviews and the contribution they make to state politics and policy research.

THE PURPOSE AND FORMAT OF ELITE INTERVIEW RESEARCH DESIGNS

Elite interviews target people directly involved in the political process (Dexter 1970). These individuals may have special insight into the causal processes of politics, and interviewing them permits in-depth exploration of specific policies and political issues. The resulting information offers not just the potential for a richer description of political processes, but also for more reliable and valid data for inferential purposes.

Elite interviews are a tool to tap into political constructs that may otherwise be difficult to examine. Especially important are constructs involving the beliefs of political actors. For example, how do legislators conceive of representation, the extent of bipartisan and institutional cooperation within state government, political and party leadership, coalition building, and the power and influence of executives? These are just some questions that have been explored by state politics scholars using elite interviews (Loepp 1999; Jewell 1982; Morehouse 1998).

Once a researcher has determined that elite interviews should be part of the systematic search for an answer to a research question, the elite interview research design should be developed and executed systematically in four basic steps:

- Identify the constructs of interest and develop observable measures and instrumentation to tap into them

- Develop sampling procedures to maximize the validity of the study

- Conduct interviews and collect corroborative data

- Analyze data

CONSTRUCTS AND QUESTIONS

The initial step in an elite interview research design is to define the concepts one needs to measure to answer the research question. Once the researcher has a clear understanding of these concepts, he or she then decides what type of person will be interviewed and prepares a valid instrument, that is, a question or series of questions that tap into the concept. Who the generic interviewee will be depends upon the research question and, most importantly, who has the information the researcher wants. If the question is as clear as, say, assessing partisan or ideological differences in policymaker orientations towards income redistribution, the obvious interview subjects are state legislators. More generally, the interview subjects could be any type of actors directly involved in the political or policy process who have the desired information. Administrators, elected members of the executive branch, and party officials might be good subjects for questions dealing with bureaucratic discretion, the political power of agency heads, or the consistency of policy agendas in state political parties, respectively. The key point is that the target population must be determined by the research question.

Whoever is interviewed, ensuring that the instrument yields valid responses can be a vexing problem. The need to measure abstract concepts often attracts researchers to the elite interview approach in the first place because these concepts are difficult to capture with other approaches (Carmines and Zeller 1979). The problem is that concepts such as distributive justice and philosophies of representation can be confusing and alien to a respondent (Reeher 1996; Jewell 1982). Rather than explaining such constructs to respondents overtly, a better strategy is to develop an instrument that poses questions that bring these underlying dimensions into relief. Using academic jargon can put off respondents, biasing responses and making interviews counterproductive. Asking questions in the language of the respondent avoids this problem. If the questions tap into the target construct validly, responses to them should converge in predictable patterns.

Two important issues need to be addressed in constructing a valid elite interview instrument: convergent and discriminant validity. Convergent validity has to do with whether a respondent has a consistent orientation toward the construct of interest. Evidence of convergent validity is an overlap in responses to questions that are designed to tap the same concept, but that have different sources of variation beyond the construct of interest (Judd, Smith, and Kidder 1991). This might be achieved by designing questions to assess the convergence of responses on the same construct in multiple policy areas. For example, questions about charter schools and pollution credits could both tap into views about the underlying construct of market competition in public policy, but in very different contexts. Responses to these questions would enable a researcher to discern the consistency of elite views on this issue.

Questions that help eliminate possibilities and thus focus in on the construct of interest in an instrument enhance discriminant validity (Judd, Smith, and Kidder 1991). For example, a state senator may say she opposes a proposal to raise state income taxes to fund local school districts. This response may reflect the senator’s attitudes toward income distribution (opposition to a progressive income tax), or it may reflect her attitude toward intergovernmental relations (the appropriate role of the state in funding local schools). A follow-up question asking whether the senator supports a proposal to enhance local school funding with a sales tax could help eliminate the latter possibility, and by doing so provide discriminant validity for the instrument’s assessment of the construct.

Carefully crafting an instrument with an eye toward both convergent and discriminant validity provides elites unambiguous opportunities to indicate their positions on the construct of interest without interference from other constructs. And, of course, regardless of the final form it takes, pre-testing the interview instrument is critically important to identify potential problems, such as unclear questions and questions with multiple interpretations, as well as key words that may serve as a basis for coding in subsequent analysis.

SAMPLING

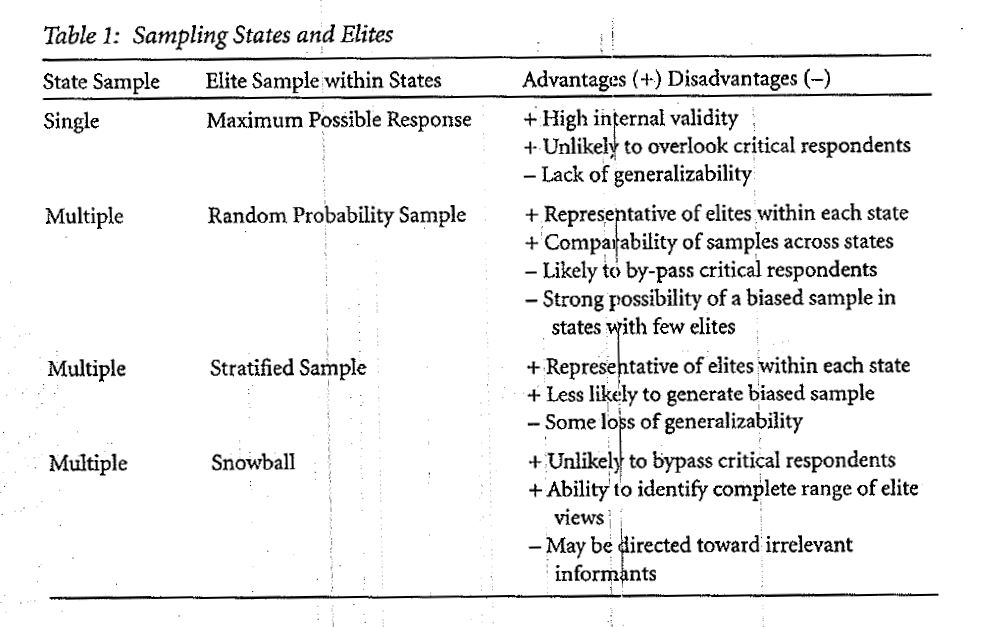

There are three basic research designs that incorporate elite interviews in state politics research: single-state studies with multiple observations, multiple-state studies with single observations, and multiple-state studies with multiple observations. Each of these offers its own mix of relative strengths and weaknesses. The appropriate design for a given study depends on how these strengths and weaknesses interact with the study’s type of respondent, research question, and the researcher’s budget.

The advantages of single-state studies are that they can have very high internal validity, and that they are unlikely to overlook critical respondents (Reeher 1996; Dexter 1970). These advantages depend heavily on achieving a large sample size, so the researcher should always try to maximize the number of observations. The low cost of single-state studies relative to multiple-state studies facilitates this. Elites can constitute a fairly small group in a single state, and sometimes a researcher can come close to canvassing the entire target population. For example, Reeher (1996) interviewed 97 percent of the Connecticut Senate in his investigation of legislators’ views of distributive justice. While such a sample-to-population ratio is not always possible, the higher the ratio, the more the advantages of a single-state study are maximized.

Multiple-state studies help overcome the single biggest disadvantage of the single-state design: the lack of generalizability. Multiple-state studies require a two-stage sampling procedure. First, a researcher must create a sample of states. A valid sample is one that represents the cases in the target population well. With large populations and sufficient resources, this can be done with a random probability sample. However, because a random sample drawn from a small target population (50 states) can easily be biased, most researchers sampling states will use a deliberate sampling strategy to ensure that their interviews capture the range of elite views across states.(1) One approach might be to select two states that represent the outer bounds of a dimension in which the researcher is interested and a third state that represents the mode on this dimension. Another strategy is for the researcher to identify classes of states (e.g., types of political culture, region, size, etc.), and select a state or two from each class as a representative of it. Ultimately, if a researcher conducts interviews in a variety of states and contexts and finds consistent results, the claim for the generalizability of those results is bolstered (Cook and Campbell 197).
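The two deliberate strategies just described can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the state labels, the scores on the dimension of interest, and the class labels are all hypothetical, and taking the first listed state at the modal score (or in each class) stands in for what would in practice be a substantive judgment by the researcher.

```python
from collections import defaultdict
from statistics import multimode

# Hypothetical states: (label, score on the dimension of interest, class).
STATES = [
    ("State A", 1, "small"),
    ("State B", 3, "large"),
    ("State C", 3, "small"),
    ("State D", 5, "large"),
    ("State E", 3, "large"),
    ("State F", 2, "small"),
]


def bounds_and_mode(states):
    """Pick the lowest- and highest-scoring states plus one state at the modal score."""
    ordered = sorted(states, key=lambda s: s[1])
    lowest, highest = ordered[0], ordered[-1]
    modal_score = multimode(s[1] for s in states)[0]
    # First listed state at the modal score that is not already an outer bound.
    typical = next(
        (s for s in states if s[1] == modal_score and s not in (lowest, highest)),
        None,
    )
    picks = [lowest, typical, highest]
    return [s[0] for s in picks if s is not None]


def one_per_class(states):
    """Pick the first listed state in each class as that class's representative."""
    by_class = defaultdict(list)
    for label, _score, cls in states:
        by_class[cls].append(label)
    return {cls: labels[0] for cls, labels in by_class.items()}


extremes_and_typical = bounds_and_mode(STATES)
class_representatives = one_per_class(STATES)
```

Either function returns a short list of states in which to conduct interviews; the choice between them depends on whether the research question is organized around a single dimension or around categories of states.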

Although there are legitimate statistical concerns about selecting cases on the dependent variable (King, Keohane, and Verba 1994), there are practical advantages to sampling states on the basis of variation in the political or policy outcomes of interest to a study (Beamer 1999; Dion 1998). For example, by selecting states in which proposed tax increases failed and succeeded, a researcher can gain insights into the necessary political conditions that must be present for a tax increase. Even with very few states in a sample, the researcher may be able to estimate the conditional probability of a tax increase given a state’s political characteristics, at least qualitatively (Dion 1998).

Once the sample of states has been selected, the second step is to create samples of the targeted elites within the selected states. If the target is a single actor (e.g., the governor), this will consist of a single observation in each state. For large target populations, a within-state sampling strategy must be developed. Assuming the elite population can be defined conceptually, advances in information technology have eased considerably the practical problem of identifying specific members of state elite target populations. On-line directories of state officeholders and web sites for state legislative and executive bodies, for example, reduce considerably the resources needed to identify respondents in a target population.

There are a number of options for creating within-state samples systematically. If a researcher’s primary interest is to leverage the comparative advantages of state-level analysis and generalize to a particular group, a random probability sample should be employed. Random probability samples are particularly appropriate when both the population and sample size are large (Henry 1990). This approach produces samples representative of within-state elites and data that support comparisons of samples across states. The drawback of random probability sampling is that it may miss critical respondents and thus produce biased samples if the target population is small (Dexter 1970). The latter concern can be addressed through stratified sampling techniques. For example, a researcher may need to sample 30 respondents from an elite population of 300 that includes two distinct groups, one with 270 members and one with 30 members. By stratifying the population by these groups, the researcher can be assured of a sample that represents the population, with twenty-seven respondents from the first group and three from the second. If the researcher wants more than three respondents from the smaller group, that group can be oversampled. These stratified sample options involve some loss of generalizability, but they reduce the risk of generating a biased elite sample (Dexter 1970).
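The arithmetic of the stratified example (30 respondents drawn from groups of 270 and 30) can be reproduced with a short Python sketch. The group names, member labels, and the 24/6 oversampled split are hypothetical. The design weights at the end are a common survey practice added here as an assumption, not something the essay itself prescribes. Note also that simple rounding need not sum exactly to the requested sample size for other population figures.

```python
import random


def proportional_allocation(strata_sizes, sample_size):
    """Allocate the sample across strata in proportion to each stratum's size."""
    population = sum(strata_sizes.values())
    return {
        name: round(sample_size * size / population)
        for name, size in strata_sizes.items()
    }


def draw_stratified_sample(strata, allocation, seed=0):
    """Draw a simple random sample of the allocated size within each stratum."""
    rng = random.Random(seed)  # fixed seed so the draw can be reproduced
    return {name: rng.sample(members, allocation[name]) for name, members in strata.items()}


def design_weights(strata_sizes, allocation):
    """Population members represented by each sampled respondent, per stratum."""
    return {name: strata_sizes[name] / allocation[name] for name in strata_sizes}


sizes = {"group_1": 270, "group_2": 30}
# Hypothetical member lists standing in for a real directory of elites.
strata = {name: [f"{name}_member_{i}" for i in range(n)] for name, n in sizes.items()}

proportional = proportional_allocation(sizes, 30)
sample = draw_stratified_sample(strata, proportional)

# Hypothetical oversample of the smaller group: 6 respondents instead of 3.
oversampled = {"group_1": 24, "group_2": 6}
weights = design_weights(sizes, oversampled)
```

With the oversampled allocation, each respondent in the smaller group stands for fewer population members than a respondent in the larger group, which is why pooled results would need to be weighted before generalizing to the whole population.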

Snowball samples can be used when a listing of the entire target population is unavailable, as is often the case with informal policy networks (Seidman 1998). This approach involves developing the sample by identifying potential respondents while interviewing respondents identified previously. Questions like, “Who else should I talk to about this?” or “Who was the main policy expert for the opposition?” can elicit such names. As the researcher progresses through this process with multiple respondents, a convergence of recommendations indicates that the sample is complete. Snowball sampling allows a researcher to garner a rich fund of information, often while conserving resources. The drawback is the potential for introducing bias into the sample, with the explicit trade-off being generalizability for depth of information. One way to reduce bias is to ask a respondent to identify activists whom they view as their opposition. Although this does not eliminate the bias toward issues focused upon by elites generally, it does help ensure a range of responses (Biernacki and Waldorf 1981; Beamer 1999).
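The snowball procedure can be thought of as a traversal of a referral network that stops when interviews yield no new names. The following Python sketch illustrates that logic under simplifying assumptions: the respondents and their referrals are hypothetical, and the `max_interviews` cap is an added safeguard for limited resources rather than part of the method as described.

```python
from collections import deque

# Hypothetical answers to "Who else should I talk to about this?"
REFERRALS = {
    "Respondent A": ["Respondent B", "Respondent C"],
    "Respondent B": ["Respondent A", "Respondent D"],
    "Respondent C": ["Respondent B", "Respondent D"],
    "Respondent D": ["Respondent C"],
}


def snowball_sample(seeds, referrals, max_interviews=50):
    """Interview the seeds, then everyone they name, until no new names appear."""
    interviewed = []
    seen = set(seeds)
    queue = deque(seeds)
    while queue and len(interviewed) < max_interviews:
        person = queue.popleft()
        interviewed.append(person)
        for named in referrals.get(person, []):
            if named not in seen:  # only previously unnamed people extend the sample
                seen.add(named)
                queue.append(named)
    return interviewed


sample = snowball_sample(["Respondent A"], REFERRALS)
```

When the queue empties, every recommendation points to someone already interviewed, which corresponds to the convergence of recommendations that signals a complete sample. Starting from seeds on opposing sides of an issue is one way to reflect the bias-reduction advice above.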

COLLECTING DATA

After the questions are written and the sample has been selected, interviewing can commence. The researcher should ask for appointments well in advance, keeping in mind the need for time between appointments, and allowing enough flexibility for appointments to be rescheduled. Before beginning any interviews, the researcher must decide on procedures for transcribing and recording data. Although tape-recording can provide an accurate record of the interview, it can inhibit a respondent from being candid (Fenno 1986; Jewell and Whicker 1994; Kingdon 1989; Beamer 1999). Interviews that are not recorded should be transcribed as soon as possible to avoid memory loss that can lead to interviewer bias. A researcher should use a written release form to get permission from the respondent at the beginning of the interview to use the information collected (Seidman 1998). Such forms are usually provided or approved by an Institutional Review Board. A verbal release may also be used, though such agreement should be recorded on audio tape. The respondent should be told before commencing the interview whether his or her answers will be confidential or anonymously attributed. Without such assurances, a respondent may be less than candid.

In conducting interviews, the most common strategy is to move from general to more specific questions (Dexter 1970; Kingdon 1989; Seidman 1998). By asking broad questions at the outset, the interviewer offers the respondent the opportunity to volunteer perceptions and talk freely, while reserving the ability to ask more specific probes in reaction to initial responses. This allows the interview to stay structured and focused, but also gives the interviewer some flexibility. Alternatively, beginning an interview with specific questions has some advantages. For example, by beginning an interview with a question about a specific state policy initiative, the researcher may immediately convey to the respondent that he or she is knowledgeable about state politics. This may decrease the likelihood that respondents will give vague and general answers.

Interviewers should never ask elites for objective information that is accessible elsewhere (e.g., demographics, standing committee assignments, prior offices held, etc.). Asking for such information wastes the respondent’s time and gives the impression that the researcher is unprepared. Since an open and unbiased flow of information depends on the respondent having confidence in and respect for the interviewer, such an impression can undermine the validity of the entire interview. The researcher should come to the interview with this objective information, even including some of it in the questioning as appropriate to enhance the interviewer’s credibility with the respondent.

ANALYSIS

Regardless of whether interviews are being used for exploratory research or hypothesis testing, a researcher should develop a systematic and careful plan for analysis. Prior to analysis, a researcher should examine the interview data with a healthy skepticism as there are many potential sources of data contamination. For example, respondents may overstate their roles and contributions, and during the interview, the researcher is not in a good position to challenge these claims (Dexter 1970; Seidman 1998). To assess the validity of the data, the researcher should: identify any possible ulterior motives of each respondent; identify any potential reasons for censored responses, such as the presence of other people at an interview; assess whether a given respondent seemed too eager to please the interviewer and hence might have responded less than candidly; and identify any idiosyncratic features of the interview (such as interruptions). Some contaminants are unavoidable, and it is important to have a clear picture of what they are and how they might influence data interpretation.

Document these problems as soon as possible after an interview by recording them parenthetically in interview transcripts.

In addition to being aware of the potential sources of data contamination, researchers widely use three techniques to assess the validity of the information collected from elite interviews (Beamer 1999; Dexter 1970; Seidman 1998). First, newspaper articles, lobbyists’ “fact sheets,” and legislative and administrative reports may provide supporting (or contradictory) evidence, if not outright verification, of a respondent’s version of events. Second, respondents with different biases and perspectives may confirm or contradict one another. For example, one might ask Republican officeholders about claims made by Democrats (without attributing authorship of these claims) about the consequences of a specific policy proposal. The Republicans might confirm the Democrats’ claims, or indicate why they might be incorrect. Third, interviewers can check the accounts and perceptions of their elite respondents with corroborative interviews with other observers of the process under study, such as government or legislative staff, reporters, and community and political activists. Again, these interviews may verify or refute elite perceptions and accounts, allowing the researcher a more balanced understanding of the phenomenon being studied.

Once a researcher is convinced his or her data are valid, the analysis designed to answer the research question can begin. Initial steps in this process involve decisions on whether the analysis will begin with case or cross-case analysis and, if the latter, which interview subjects will be grouped together for the analysis (Patton 1990). For example, a single-case analysis may focus on multiple events or issues in each individual state (e.g., legislative proposals for health reforms and education reforms), while a cross-case analysis will typically focus on a single issue or topic evaluated across states (Patton 1990).

Interview data are often analyzed with content analyses that reduce the transcribed interview from its text to summarized expressions about the subjects of interest to the interviewer (Seidman 1998). These analyses may be based on the frequency with which respondents use certain keywords. If this is the case, the research protocol should state which keywords reflect a specific variable or construct and identify relationships among variables and constructs (Kingdon 1989; Schneider and Ingram 1993). If a research team is jointly coding interviews, particular care should be taken to ensure inter-coder reliability. If working alone, a researcher should have a colleague review a subset of interviews to assess coding reliability, estimate an error rate, and identify problematic responses (Kingdon 1989).
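A keyword-based protocol and a reliability check of the kind described above might look like the following Python sketch. The constructs, keywords, transcript text, and coder decisions are all hypothetical. Cohen's kappa is included as one widely used chance-corrected agreement statistic; it is an assumption of this sketch, not a measure the essay itself names.

```python
import re
from collections import Counter

# Hypothetical protocol: each construct is signaled by a list of keywords.
PROTOCOL = {
    "market_competition": ["competition", "choice", "market"],
    "redistribution": ["fairness", "progressive", "burden"],
}


def code_transcript(text, protocol):
    """Count keyword occurrences per construct in one transcript."""
    words = Counter(re.findall(r"[a-z']+", text.lower()))
    return {
        construct: sum(words[k] for k in keywords)
        for construct, keywords in protocol.items()
    }


def percent_agreement(codes_a, codes_b):
    """Share of passages to which two coders assigned the same code."""
    return sum(a == b for a, b in zip(codes_a, codes_b)) / len(codes_a)


def disagreements(codes_a, codes_b):
    """Positions of passages the coders coded differently, for follow-up review."""
    return [i for i, (a, b) in enumerate(zip(codes_a, codes_b)) if a != b]


def cohens_kappa(codes_a, codes_b):
    """Agreement corrected for chance (undefined if expected agreement is 1)."""
    n = len(codes_a)
    observed = percent_agreement(codes_a, codes_b)
    freq_a, freq_b = Counter(codes_a), Counter(codes_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    return (observed - expected) / (1 - expected)


transcript = "Parents want choice, and competition improves schools. Fairness matters too."
counts = code_transcript(transcript, PROTOCOL)

# Hypothetical codes assigned to the same four passages by two coders.
coder_1 = ["market", "redistribution", "market", "market"]
coder_2 = ["market", "redistribution", "redistribution", "market"]
agreement = percent_agreement(coder_1, coder_2)
error_rate = 1 - agreement
kappa = cohens_kappa(coder_1, coder_2)
```

The list returned by `disagreements` points to the specific passages that a colleague's review would flag as problematic, while the error rate summarizes how often the two sets of codes diverge.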

The coding mechanisms should be identified and recorded for each interview for follow-up work and verification (Dexter 1970; Morehouse 1998). The interviews should be analyzed to identify instances in which a respondent perceived tension or a logical disagreement among variables, and which variable, or set of variables, dominated the individual’s thinking (Kingdon 1989). Other elements of the interview analysis could include whether or not a respondent volunteered information or whether it was solicited by the interviewer, whether one or more variables were relevant to a specific process or decision, and whether the respondent saw his or her view as being widely shared by his or her peers. Table 2 provides a guide for developing a coding protocol and interview analysis.
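One way to keep such coding decisions recorded and verifiable is to store each coded passage as a structured record. The Python sketch below is a hypothetical layout: every field name is an assumption made for illustration and is not drawn from the coding guide in Table 2.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CodedPassage:
    """One coded statement from an interview transcript (hypothetical fields)."""
    interview_id: str
    construct: str                       # variable or construct the passage reflects
    volunteered: bool                    # True if unprompted, False if solicited by a probe
    other_constructs: List[str] = field(default_factory=list)  # also relevant to the decision
    in_tension: bool = False             # respondent perceived tension among the constructs
    dominant: bool = False               # this construct dominated the respondent's thinking
    seen_as_widely_shared: Optional[bool] = None  # None if the respondent did not say
    notes: str = ""                      # parenthetical remarks, e.g. interruptions


def share_volunteered(passages, construct):
    """Fraction of passages on a construct that respondents raised unprompted."""
    relevant = [p for p in passages if p.construct == construct]
    if not relevant:
        return None
    return sum(p.volunteered for p in relevant) / len(relevant)


passages = [
    CodedPassage("interview_01", "redistribution", volunteered=True, dominant=True),
    CodedPassage("interview_02", "redistribution", volunteered=False,
                 other_constructs=["intergovernmental_relations"], in_tension=True),
    CodedPassage("interview_02", "market_competition", volunteered=True),
]
volunteered_share = share_volunteered(passages, "redistribution")
```

Keeping the records in this form lets a second coder or a later follow-up study trace each summary figure back to the interviews and passages that produced it.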

Several software packages are available that provide coding processes for content analysis of interviews, including Kwalitan, Oxford Concordance Package, and the Ethnograph. While the specifics of such packages are beyond the scope of this essay, a good starting point to learn about them is to access Text Analysis Resources on the internet at: http://www.intext.de/TEXTANAE.HTM#qual.

CONCLUSION

Research projects using elite interviews should start with well-thought-out instruments, target appropriate respondents, and follow through with systematic interview and analysis procedures. Rigorous attention to the instrumentation, sampling, and conduct of elite interviews provides opportunities to enhance the reliability and validity of the data they generate. When done properly, elite interviews offer a rich, cost-effective component in a research design that can produce a valid and unique data resource for state politics studies.

REFERENCES

Henry, Gary. 1990. Practical Sampling. Newbury Park, CA: Sage Publications.

Jewell, Malcolm E. 1982. Representation in State Legislatures. Lexington, KY: University of Kentucky Press.

Jewell, Malcolm E., and Marcia Lynn Whicker. 1994. Legislative Leadership in the American States. Ann Arbor, MI: University of Michigan Press.

Judd, Charles M., Eliot R. Smith, and Louise H. Kidder. 1991. Research Methods in Social Relations. 6th ed. Fort Worth, TX: Holt, Rinehart, and Winston, Inc.

King, Gary, Robert O. Keohane, and Sidney Verba. 1994. Designing Social Inquiry: Scientific Inference in Qualitative Research. Princeton, NJ: Princeton University Press.

Kingdon, John W. 1989. Congressmen’s Voting Decisions. 3rd ed. Ann Arbor, MI: University of Michigan Press.

Loepp, Daniel. 1999. Sharing the Balance of Power: An Examination of Shared Powering the Press.

Morehouse, Sarah McCally. 1998. The Governor As Party Leader: Campaigning and Governing. Ann Arbor, MI: University of Michigan Press.

Patton, Michael Quinn. 1990. Qualitative Evaluation and Research Methods. Newbury Park, CA: Sage Publications.

Reeher, Grant. 1996. Narratives of Justice: Legislators’ Beliefs about Distributive Fairness. Ann Arbor, MI: University of Michigan Press.

Schneider, Anne, and Helen Ingram. 1993. “Social Construction of Target Populations: Implications for Policy.” American Political Science Review 87:334-47.

Seidman, Irving. 1998. Interviewing as Qualitative Research: A Guide for Researchers in Education and the Social Sciences. New York, NY: Teachers College Press.

Wahlke, John C., Heinz Eulau, William Buchanan, and LeRoy C. Ferguson. 1962. The Legislative System. New York: John Wiley and Sons.

ABOUT THE AUTHORS

Glenn Beamer is Assistant Professor of Government and Health Evaluation Sciences at the University of Virginia.